Zen 2 comparison of monolithic 4650G versus chiplet 3500X

Ryzen's rise on the desktop has been thoroughly benchmarked and tested. We know how it scales with memory and so on. But what about mobile parts? How much do they lose when stuck with 3200CL22 JEDEC RAM? Are there any other, less obvious compromises that affect performance? Let's take a look.

This article compares a mobile APU design (Ryzen 4650G) with the desktop chiplet-based Ryzen 3500X. Even though the 4650G should perform a bit better than the 3500X, there is an unexpected performance drop in relatively lightweight gaming with a dedicated GPU. I'll go over various aspects of the system to see what's causing this.

If you want a quick overview of how it performs you can check my World of Warcraft benchmarks as well.

Intro

When Intel launched Tiger Lake, it used the LPDDR4X advantage to beat the Ryzen 4800U running standard 3200CL22 SO-DIMMs. Aside from iGPU performance, this affected some memory-focused synthetic benchmarks.

15W U-series Zen 2 chips like the 4800U downclock RAM to 2666MHz when they encounter a CPU-only workload to favor lower latencies. Full RAM speed is used when a heavy GPU workload is detected. Tiger Lake doesn't do that, so with LPDDR4X it offers much higher memory throughput and large gains in some synthetic benchmarks. Such benchmarks don't mimic real-world usage very well, but they can showcase lesser-known characteristics of a mobile APU that may resurface in some scenarios.

Such lesser-known features and behaviors of mobile parts can cause confusion. PC builders know they can't really go beyond 2000 MHz for Infinity Fabric on a Zen 2 or Zen 3 CPU, but with a Zen 2 APU you can go way beyond that, which could be used for even better performance.

We also know that Zen 3 is really strong in gaming, but if you move to laptops the results can be quite different:

The latest Zen 3 mobile CPU is a bit slower than an old 14nm Intel CPU? How come? It's not a direct CPU-to-CPU comparison due to different laptops, yet such dark corners of benchmarking and analysis of mobile parts still persist.

Test setup and initial comparison

I've decided to compare the desktop Ryzen 3500X (Matisse) with the Ryzen 4650G, a Renoir Zen 2 APU. How do they behave in CPU workloads (taking into account their core and clock differences), and how do they perform in gaming with a dGPU? Can you build a solid PC around an APU right now and later add only a dGPU, without needing to upgrade the CPU?

I've used Gigabyte B450M DS3H and Asrock A520M-HDV motherboards with multiple kits of memory, all described in my DIMM vs SO-DIMM benchmarks.

| | Ryzen 3500X Matisse | Ryzen 4650G Renoir |
|---|---|---|
| Core configuration | 6C/6T | 6C/12T |
| Base/Boost Clock | 3.6 GHz / 4.1 GHz | 3.7 GHz / 4.2 GHz |
| L1 / L2 / L3 cache | 384KB / 3MB / 32MB | 384KB / 3MB / 8MB |
| TDP | 65W | 65W |
| Floorplan / die shot | wikichip.org | guru3d.com |
| dGPU | GTX 1070 | GTX 1070 |

3500X has more L3 cache but 100MHz lower frequency and doesn't have SMT. In synthetic benchmarks that use SMT this should show up:

Everything seems in order. The slightly higher-clocked 4650G wins by a bit in single-core and by more in multi-core thanks to SMT. The 8C/16T 4800U tops the multi-core Cinebench run despite being a low-power chip.

If we go to some simple CPU benchmarks like, say, Geekbench 5, the trend continues:

In single-core the clock difference is quite small and the load is short, so both CPUs end up nearly equal. In multi-core the 6C/12T part beats the 6C/6T one, especially when working with archives and the like. The 4650G also has lower latencies, which should boost some Geekbench scores.

Also, as you can see, the single-channel 1x8GB configuration is much slower than the dual-channel ones. The 4-stick configuration for rank interleaving (all sticks are single rank) doesn't do much for Zen 2 (for Zen 3 the effect of bank or rank interleaving is bigger).
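To put the single-channel versus dual-channel gap in perspective, here is a minimal sketch of the theoretical peak DDR4 bandwidth. It assumes the standard 64-bit data bus per channel; the kit labels are illustrative and not the exact kits used in the charts, and real benchmark throughput will always be below these ceilings.

```python
def peak_bandwidth_gbs(transfer_rate_mts: int, channels: int,
                       bus_width_bits: int = 64) -> float:
    """Theoretical peak DDR bandwidth in GB/s (10^9 bytes per second).

    transfer_rate_mts is the DDR rating, e.g. 3200 for DDR4-3200.
    """
    bytes_per_transfer = bus_width_bits // 8
    return transfer_rate_mts * 1_000_000 * bytes_per_transfer * channels / 1e9


if __name__ == "__main__":
    # Illustrative configurations, not measured results.
    configs = [
        ("1 channel, DDR4-3200", 3200, 1),
        ("2 channels, DDR4-3200", 3200, 2),
        ("2 channels, DDR4-3600", 3600, 2),
    ]
    for label, rate, channels in configs:
        print(f"{label}: {peak_bandwidth_gbs(rate, channels):.1f} GB/s")
```

A single channel halves the ceiling regardless of timings, which is why no amount of latency tuning lets the 1x8GB setup catch up.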

OK, let's move on to some gaming benchmarks. How about 3DMark?

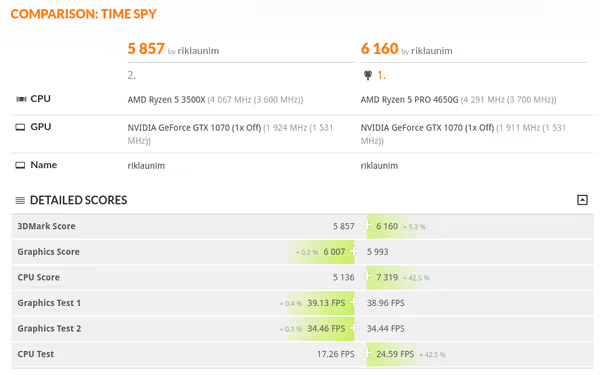

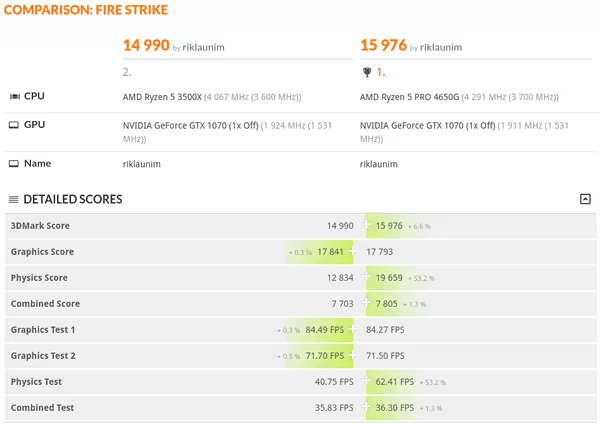

So it looks like the 4650G is slightly better than the 3500X, aside from the single-channel and low-end SO-DIMM configurations? But wait - 3DMark scores consist of GPU and CPU benchmarks:

The 6C/12T low-latency chip beats the 6C/6T higher-latency one, which shouldn't be a surprise. But if the CPU score is so high, what are the GPU scores like?

Welcome to our investigation. Why does the 4650G have a lower GPU score than the 3500X? Is it because of PCIe lane differences? And why does it scale with memory so much?

And if we look at the framerate in World of Warcraft with the help of MSI Afterburner we get this:

Both CPUs reach their target frequencies, temperatures stay well below high levels, and all seems to be working fine.

Is it the PCIe lanes difference?

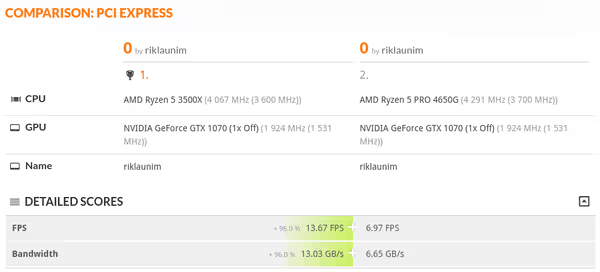

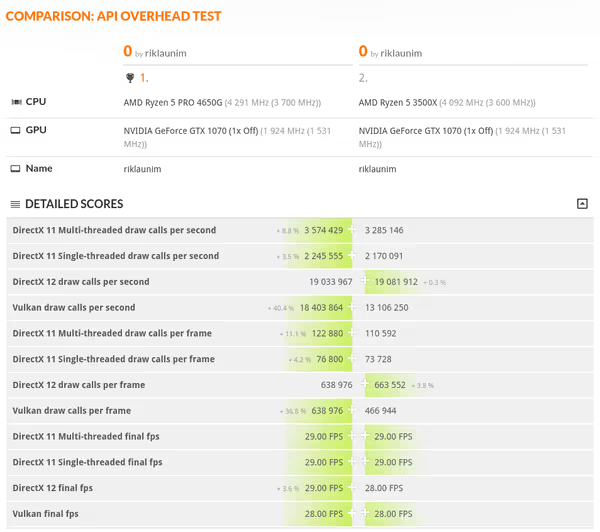

3500X runs the x16 slot at x16 PCIe 3.0. The 4650G should offer x16 for the GPU but if the motherboard uses any extra PCIe lanes for I/O it will run the same x16 slot at x8 PCIe 3.0 speed. If you run 3DMark PCIe bandwidth benchmarks it will be super clear:

3500X has double the PCIe bandwidth which also allows it to spam more draw calls for DX12 and Vulkan which are parallelized enough to hit the PCIe bandwidth limit in a synthetic benchmark. DX11 is mostly single threaded so the 4650G with stronger single-core clocks wins by a bit.

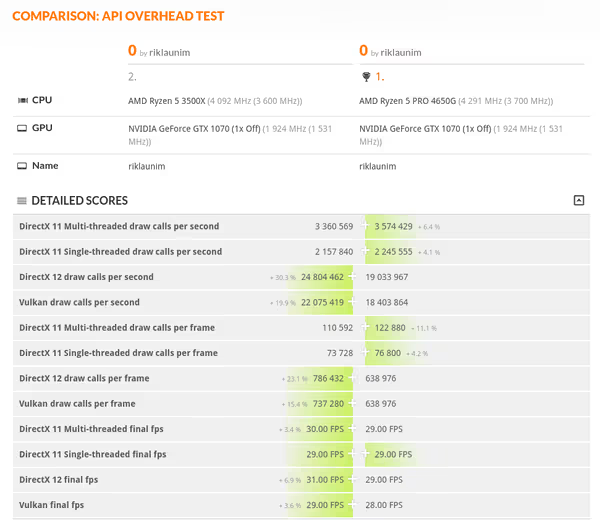

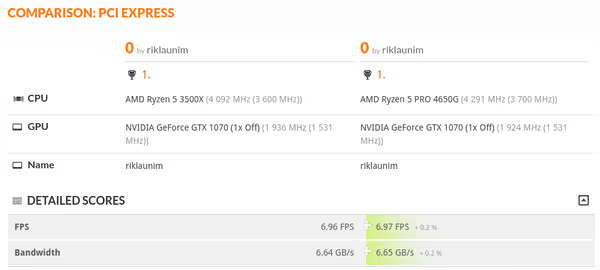

If I reduce the PCIe version to 2.0 for 3500X (x16 2.0 is equal to x8 3.0) we get this:

So we have a tie on PCIe bandwidth as expected. 3500X still wins in DX12 by a very small margin, Vulkan goes crazy in favor of 4650G and for DX11 it has a ~10% lead.
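The "x16 2.0 equals x8 3.0" equivalence can be checked with a little arithmetic. This is a minimal sketch using the nominal per-lane signalling rates and line encodings of each PCIe generation; it ignores packet and protocol overhead, so measured numbers such as the 3DMark bandwidth test will be lower.

```python
# Per-generation (transfer rate in GT/s, line-encoding efficiency).
PCIE_GENERATIONS = {
    "2.0": (5.0, 8 / 10),      # 8b/10b encoding
    "3.0": (8.0, 128 / 130),   # 128b/130b encoding
    "4.0": (16.0, 128 / 130),  # 128b/130b encoding
}


def link_bandwidth_gbs(generation: str, lanes: int) -> float:
    """Nominal one-direction link bandwidth in GB/s, before protocol overhead."""
    rate_gts, efficiency = PCIE_GENERATIONS[generation]
    return rate_gts * efficiency / 8 * lanes  # 8 bits per byte


if __name__ == "__main__":
    for generation, lanes in [("3.0", 16), ("2.0", 16), ("3.0", 8)]:
        bandwidth = link_bandwidth_gbs(generation, lanes)
        print(f"PCIe {generation} x{lanes}: {bandwidth:.2f} GB/s")
```

The two links are not bit-for-bit identical because of the different encodings, but they land within about 2% of each other, which is close enough to treat the PCIe 2.0 x16 run as a stand-in for x8 3.0.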

Does it affect games? - no, not really:

| | 3500X x16 PCIe 3.0 | 3500X x16 PCIe 2.0 | 4650G x8 PCIe 3.0 |
|---|---|---|---|
| Superposition | 25492 | 25677 | 21400 |
| Unigine Valley | 7349 | 7294 | 5986 |
| Night Raid GPU score | 77 166 | 76 977 | 74 488 |

Shouldn't Renoir have 16 + 4 + 4 PCIe configuration?

The 4650G should offer x4 PCIe for an NVMe SSD, another x4 for the chipset, and x16 for the GPU. The problem arises when the motherboard uses some extra lanes for additional SATA controllers or other I/O. Renoir is then at a disadvantage and the lanes for the GPU drop to x8; this is motherboard-dependent. Matisse has the same number of lanes, but they are PCIe 4.0 while Renoir's are PCIe 3.0. The lack of x16 to the GPU could also be due to some odd BIOS configuration, as the Gigabyte B450M DS3H doesn't explicitly use extra PCIe lanes for anything special.

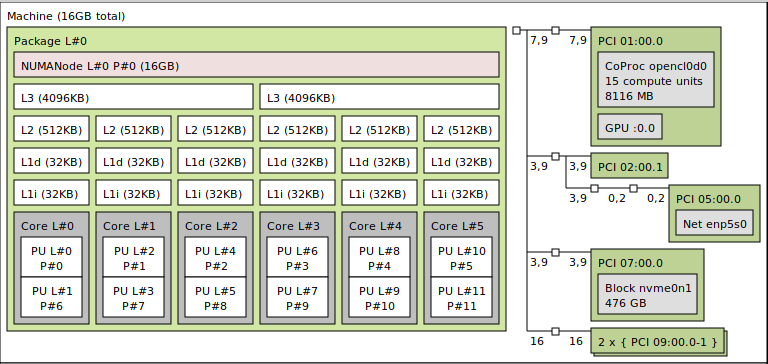

SoC design

Desktop Matisse and mobile Renoir use different designs. The 4650G, even as a desktop CPU, is based on a mobile monolithic design. The 3500X consists of an I/O die (GlobalFoundries 12nm) and a Core Complex Die (TSMC 7nm) that contains up to two CPU complexes. Each complex can have up to 4 cores, so 8 per CCD. The two complexes are connected via an interconnect, and the CCD as a whole connects to the I/O die, which handles RAM and other peripherals.

Renoir is a single chip containing the equivalent of one CCD (so up to 8 cores), with core complexes similar to Matisse's but with only 4MB of L3 cache each instead of 16MB. As there is no separate I/O die and no additional CCD, distances are much shorter and everything is on-die, so the latency of internal communication is much lower. Also, the memory controller supports much higher frequencies at a 1:1 ratio with the Infinity Fabric clock, meaning you can use an extreme DDR4 kit with an APU but not necessarily with Zen 2 or Zen 3 chiplet-based CPUs.
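As a rough illustration of what the 1:1 ratio means in numbers, here is a small sketch. It relies on the fact that the real memory clock is half the DDR rating, and it takes the roughly 2000 MHz Infinity Fabric ceiling for chiplet CPUs mentioned earlier as an assumption; the actual limit varies from chip to chip.

```python
# Rough ceiling quoted in this article for Zen 2/Zen 3 chiplet CPUs;
# treat it as an assumption, since individual chips differ.
CHIPLET_FCLK_CEILING_MHZ = 2000.0


def memclk_mhz(ddr_rating_mts: float) -> float:
    """DDR memory transfers twice per clock, so MEMCLK is half the rating."""
    return ddr_rating_mts / 2


def runs_one_to_one(ddr_rating_mts: float, fclk_ceiling_mhz: float) -> bool:
    """True if FCLK can match MEMCLK without exceeding the given ceiling."""
    return memclk_mhz(ddr_rating_mts) <= fclk_ceiling_mhz


if __name__ == "__main__":
    for rating in (3200, 3600, 4000, 4533):
        needed = memclk_mhz(rating)
        ok = runs_one_to_one(rating, CHIPLET_FCLK_CEILING_MHZ)
        verdict = "fits" if ok else "exceeds"
        print(f"DDR4-{rating}: needs FCLK {needed:.1f} MHz, "
              f"{verdict} a {CHIPLET_FCLK_CEILING_MHZ:.0f} MHz ceiling")
```

Under that assumption a chiplet CPU tops out around DDR4-4000 at 1:1, while a DDR4-4533 kit would need the fabric to run at about 2266 MHz.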

Latency was one key aspect in which Intel CPUs excelled, which allowed them to retain the king-of-gaming crown, especially in latency-sensitive games. With Zen 3 it has improved, but with monolithic APU designs the gap should be even smaller, and with some extreme memory kits the APU's latency may even end up lower.

If we compare 3DMark Time Spy and Fire Strike results, we can see how the 4650G annihilates the 3500X in CPU-based benchmarks while still losing by a bit in GPU ones:

This comes from the lower latency of internal communication (and sometimes SMT) more than from the extra 100MHz of clock speed.

Monolithic vs chiplet memory controller differences?

If the monolithic design has improved latencies and a wider Infinity Fabric link, we should see that in AIDA64 benchmarks (A520, 3600CL16):

As we can see, the 4650G has slightly lower latencies and higher cache throughput. The L3 results are a bit odd, but at some lower RAM frequencies (B450) the results are more consistent:

Renoir can dynamically manage the IF link, throttling it if needed, which is why some results with higher-frequency memory may be atypical on some systems.

Are we trolled by pro features?

As the standard APUs (4600G, 4700G) are not available to end users but the 4650G and 4750G are, we get the pro management and security features that big companies, governments, or Arasaka want. One such feature is TSME (which is not TSMC's alter ego). TSME is physical memory encryption, and when enabled it impacts memory-related performance. For normal PCs it should be disabled. On the Gigabyte B450M DS3H I had to dig deep into the menus to find and disable it; it didn't even have a tooltip string.

After disabling TSME I reran some of the benchmarks:

- Superposition: 22 295 (from 21 400; 3500X at 25 492)

- Unigine Valley: 6071 (from 5986; 3500X at 7349)

- Night Raid: 39 455 (from 38 945; 3500X at 37 822)

- Night Raid GPU: 72 635 (from 71 120; 3500X at 77 101)

- World of Warcraft (Stonard): 230 FPS (from 229.3 FPS; 3500X at 280.4 FPS)

- PCMark 10: 6036 (from 5851)

- Memory latency: 65.3 ns (from 72.1 ns; 3200 16-15-15-35; 3500X at 75.8 ns)

There is some improvement, especially on the CPU compute side, but it's nowhere near 3500X in lightweight gaming performance.
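To make the size of those changes easier to compare, here is a small sketch that converts the before and after figures from the list above into percentages. The numbers are copied from the list; the dictionary layout and function name are just illustrative.

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from before to after, in percent."""
    return (after - before) / before * 100


# (TSME enabled, TSME disabled) for the 4650G, taken from the list above.
TSME_RESULTS = {
    "Superposition": (21400, 22295),
    "Unigine Valley": (5986, 6071),
    "Night Raid": (38945, 39455),
    "Night Raid GPU": (71120, 72635),
    "World of Warcraft (FPS)": (229.3, 230.0),
    "PCMark 10": (5851, 6036),
    "Memory latency (ns, lower is better)": (72.1, 65.3),
}

if __name__ == "__main__":
    for name, (before, after) in TSME_RESULTS.items():
        print(f"{name}: {percent_change(before, after):+.2f}%")
```

The memory latency drop is the largest relative change by far, while the gaming-style results move by only a few percent at most.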

Is it a Windows problem?

Using Ubuntu 21.04 and Phoronix benchmarks, I ran a set of gaming and compute/memory-related benchmarks. Here are some of the compute ones:

As expected, the 4650G is better in those scenarios. When we switch to gaming we get this:

We get the same pattern - in gaming with dGPU 3500X wins. Also, SMT is not the cause of the problem - when disabled in the BIOS it doesn't improve the results.
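Since the x8 versus x16 link question is motherboard-dependent, it can be handy to confirm the negotiated link on the Linux install as well. The following is a minimal sketch that reads the standard PCI sysfs attributes for display controllers (PCI class 0x03). It is not what was used for the results in this article, and keep in mind that GPUs may lower the link speed at idle, so the width is the more telling value.

```python
from pathlib import Path

LINK_ATTRIBUTES = (
    "current_link_width", "max_link_width",
    "current_link_speed", "max_link_speed",
)


def read_link_status(device_dir: Path) -> dict:
    """Read the PCIe link attributes of one device; missing ones become None."""
    status = {}
    for name in LINK_ATTRIBUTES:
        try:
            status[name] = (device_dir / name).read_text().strip()
        except OSError:
            status[name] = None
    return status


def display_controllers(sysfs_root: Path = Path("/sys/bus/pci/devices")):
    """Yield sysfs directories of PCI devices whose class code starts with 0x03."""
    if not sysfs_root.is_dir():
        return  # not Linux, or sysfs is not mounted
    for device_dir in sorted(sysfs_root.iterdir()):
        try:
            pci_class = (device_dir / "class").read_text().strip()
        except OSError:
            continue
        if pci_class.startswith("0x03"):
            yield device_dir


if __name__ == "__main__":
    for device_dir in display_controllers():
        status = read_link_status(device_dir)
        print(f"{device_dir.name}: x{status['current_link_width']} "
              f"(max x{status['max_link_width']}), "
              f"{status['current_link_speed']} "
              f"(max {status['max_link_speed']})")
```

If the dGPU reports a current width of 8 while its maximum is 16, the slot is running at x8 as described in the PCIe section.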

What are our other options?

Right now I don't know what can be causing this performance disparity. Any ideas? Is it just because 4650G has less Game Cache a.k.a. L3 cache? ;)

How high can you go with RAM on Renoir?

guru3d.com went up to 4533CL16 1:1 in their benchmarks on a premium motherboard so you can check that out - for iGPU, dGPU, and CPU compute performance.

Ryzen APUs in pro and non-pro variants are available in pre-built nettops and small PCs. Most of the small ones use SO-DIMM memory, limiting you to pretty much 3200CL22. Note that the better SO-DIMM kits you may find use 1.35V, and a given device is likely not compatible with 1.35V SO-DIMMs, so if you have one, check its manual and specs before you buy such RAM.
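To see what being limited to 3200CL22 costs compared with tighter kits, here is a minimal sketch converting CAS latency from clock cycles to nanoseconds. It only covers the CAS component, not the full memory latency that AIDA64 reports; the kits listed are the ones mentioned in this article.

```python
def cas_latency_ns(ddr_rating_mts: float, cas_cycles: int) -> float:
    """CAS latency in nanoseconds.

    The memory clock is half the DDR rating, so one cycle lasts
    2000 / rating nanoseconds.
    """
    return cas_cycles * 2000 / ddr_rating_mts


if __name__ == "__main__":
    kits = [
        ("3200CL22 (JEDEC SO-DIMM)", 3200, 22),
        ("3200CL16", 3200, 16),
        ("3600CL16", 3600, 16),
        ("4533CL16", 4533, 16),
    ]
    for label, rating, cas in kits:
        print(f"{label}: {cas_latency_ns(rating, cas):.2f} ns")
```

At the same 3200 rating, CL22 takes 13.75 ns against 10 ns for CL16, so the JEDEC SO-DIMM timings give up a noticeable chunk of latency before any other platform difference comes into play.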

If you are building an APU system to later add a dGPU, you can use a good motherboard with a more premium memory kit to get the most out of the APU in both configurations. Note that stressing the memory controller with a higher frequency generates more heat, so to avoid losing CPU clocks you will have to cool the APU really well.

Where to get an AMD APU?

Zen 3 APUs have been launched for OEMs, so pre-built systems should show up soon after the time of writing. Zen 2 APUs are more or less available. The non-pro 4600G and 4700G come only in pre-built systems, small nettops or PCs. Pro variants are in somewhat more expensive pre-builts, but some stores order trays of those CPUs and sell them to end users. Check your local stores for the 4650G. The 4750G is an 8C/16T part with Vega 8 instead of Vega 7, but its price is close to double that of the 4650G, so I would say it's not the best value right now. AliExpress offers them as well, and some people have also got them locally from Amazon.

Summary

Right now, when dGPUs, even the placeholder ones, are hard to get at sane prices, an APU PC intended for a later dGPU upgrade could be a really good and handy build.

There is some difference in light gaming benchmarks, but it isn't large, and in more complex games it may be completely overshadowed by the better CPU performance or simply by a GPU bottleneck.

If I get any tips or ideas on what could cause this performance disparity I'll update the article and add corresponding benchmarks.
