OCuLink and USB4 eGPU with Nvidia cards on GPD Win Max 2

After testing Intel Arc and Radeon RDNA cards as external graphics cards, it's time to look at Nvidia. The cards do work over OCuLink, but they require running a special script that fixes Error 43. Thunderbolt 3/USB4 isn't a problem either, although in some cases the performance will be lower.

Nvidia graphics cards over OCuLink setup

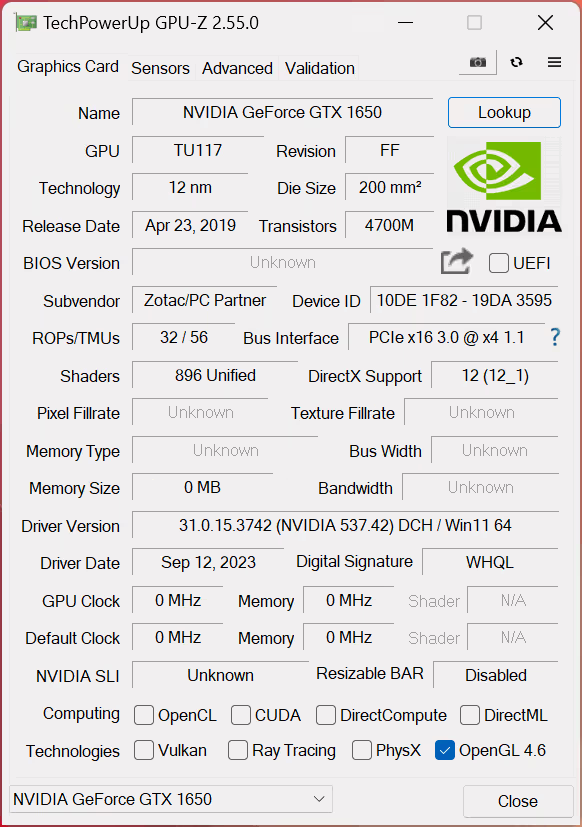

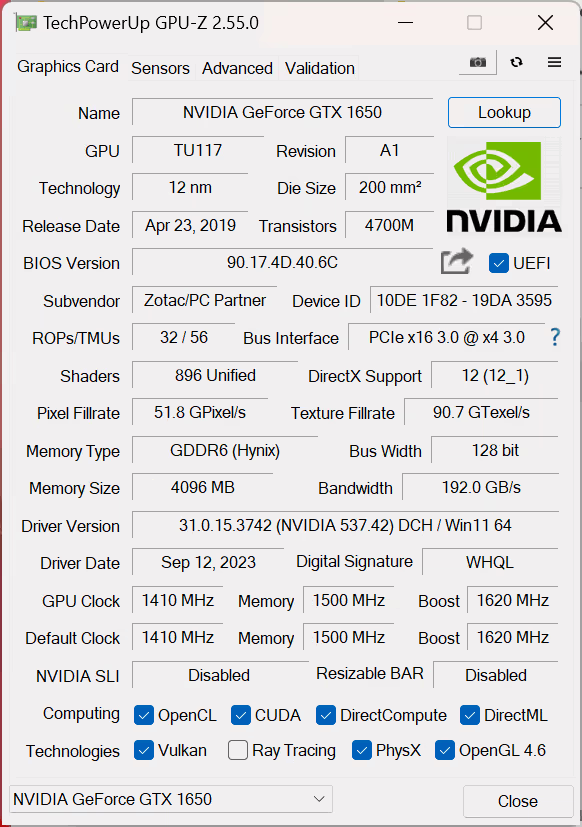

Install your graphics card in the OCuLink adapter board, connect it to the GPD Win Max 2 or other compatible device, and boot the device. By default, the GPU will be present in the device manager, but you will be unable to use it.

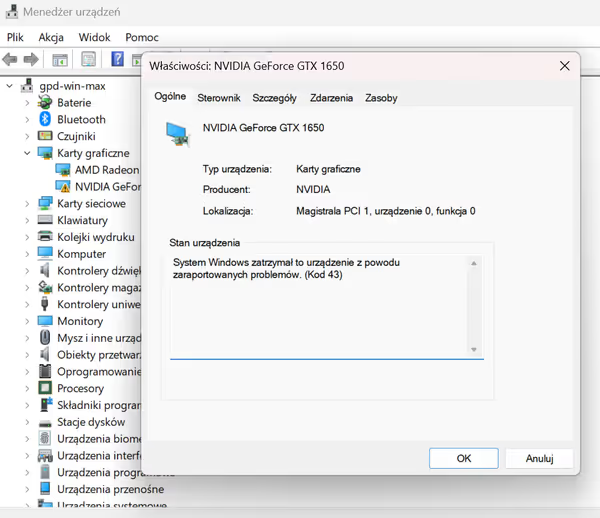

If you haven't installed the drivers yet, you can do it now. Reboot the system, and the device should then report Error 43:

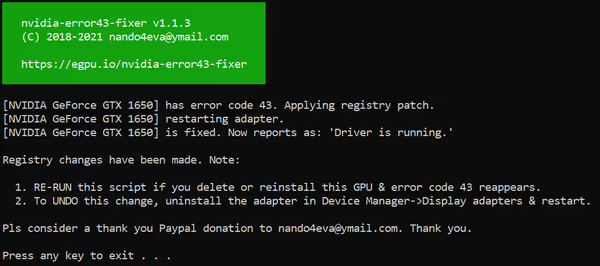

To fix this error and enable the graphics card, you have to run the nvidia-error43-fixer script.

If you change the GPU or the settings get lost, and the system boots with the GPU in an unusable state again, just reboot to get Error 43 and run the script again.

Nvidia eGPU benchmarks

For the tests I used a GPD Win Max 2 laptop with a Ryzen 7840U and 32GB of 7500MT/s RAM. For comparison, I also added Intel Xe graphics results from an HP 15-dw3000ni laptop (1165G7 Tiger Lake, 2x8GB 3200CL22 RAM). The desktop is a Ryzen 5900X with 4x8GB 3800CL16 RAM.

Thunderbolt 3 / USB4 was using PCIe 3.0 x4. OCuLink supports PCIe 4.0, but the GPD Win Max 2 defaults to PCIe 3.0, and I used that for testing. The laptop can be forced into a PCIe 4.0 connection, but it then disables CPU clock boost, which hurts overall performance. I'm still looking into the specifics of this behavior.

Pure GPU benchmarks show good scaling across the GTX 1650, RTX 3050 and RTX 3070. FFXIV Endwalker hits a CPU bottleneck with the low-power Ryzen, yet even a basic eGPU greatly improves performance, which could be attributed to, for example, a much greater pixel fill rate (this needs confirmation and additional testing).

OCuLink vs Thunderbolt 3

Thunderbolt 3 carries PCIe traffic, but as a connection protocol it is also managed by the CPU. This causes some performance hits when the CPU has to handle a lot of other I/O in the system. OCuLink is a pure PCIe connection, and the CPU isn't involved in any additional tasks.

With eGPU setups you can use your laptop screen (internal configuration), in which case part of the available link bandwidth is used to send the rendered frames back to the laptop. If you connect an external display directly to the eGPU, there is no such need, which can improve performance when the link throughput is the limiting factor.
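To get a feel for how much bandwidth the internal-screen path can take, here is a rough back-of-the-envelope sketch. It assumes uncompressed 32-bit frames copied back once per displayed frame, and the 1920x1080 resolution and frame rates are illustrative assumptions, not the settings used in these tests. The 3.36 GB/s link figure is the OCuLink PCIe 3.0 x4 measurement reported later in this article.

```python
# Rough estimate of the bandwidth needed to copy rendered frames back to the
# laptop's internal screen. Assumptions (not from the tests in this article):
# uncompressed frames, 4 bytes per pixel, one copy per displayed frame.

def frame_copy_gb_per_s(width: int, height: int, fps: int, bytes_per_pixel: int = 4) -> float:
    """Bandwidth in GB/s (decimal) for sending every frame back over the link."""
    return width * height * bytes_per_pixel * fps / 1e9

# Measured OCuLink PCIe 3.0 x4 throughput reported in this article.
LINK_GB_PER_S = 3.36

if __name__ == "__main__":
    # Hypothetical resolution and frame rates, chosen only for illustration.
    for fps in (60, 120):
        cost = frame_copy_gb_per_s(1920, 1080, fps)
        share = cost / LINK_GB_PER_S
        print(f"1920x1080 @ {fps} fps: {cost:.2f} GB/s, {share:.0%} of the link")
```

Under these assumptions the copy-back traffic grows linearly with frame rate, which is one plausible reason why very high-FPS workloads would suffer more on the internal screen than on a display attached to the eGPU.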

Using the laptop screen slightly lowers performance. Thunderbolt 3 is only slightly slower in those synthetic benchmarks and in FFXIV. However, there are other cases.

Night Raid shows a big performance drop on Intel, AMD and Nvidia cards alike when the internal screen is being used. This could be a latency or timing issue.

Cyberpunk 2077 uses the CPU a lot, which causes a big performance loss over Thunderbolt. The stronger Ryzen 5900X with an x16 PCIe connection instead of x4 pulls around 25% more performance.

eGPU Laptop vs Desktop

As seen above, Cyberpunk 2077 gets around 25% more performance on the desktop. CPU-bound FFXIV gains even more, while the x16 versus x4 PCIe connection gives very similar Time Spy GPU scores and much better Night Raid GPU scores.

OCuLink PCIe 3.0 vs 4.0 for GPD Win Max 2

An RTX 3070 connected via OCuLink at PCIe 3.0 x4 reaches 3.36 GB/s in the 3DMark PCIe speed test. Switching OCuLink to PCIe 4.0 pushes the throughput to 6.71 GB/s. Because the GPD Win Max 2 disables CPU turbo in that mode, a fair benchmark comparison is impossible: all CPU and game benchmark scores decrease because the CPU runs at around 3.3 GHz instead of boosting to around 5 GHz.

GPD commented on the issue: there is some instability when the CPU is under high load while OCuLink is working at PCIe 4.0. They are talking with AMD about this. At the time of writing, no further information is available. Some users also used the 2230 SSD slot with an OCuLink adapter, and that worked without problems at PCIe 4.0 and maximum CPU clocks, so the issue may be related to which PCIe lanes are used by the external OCuLink connector and the M.2 slot.
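As a sanity check on those throughput numbers, the sketch below compares them with the theoretical peak of a four-lane link. It uses the standard per-lane rates (8 GT/s for PCIe 3.0, 16 GT/s for PCIe 4.0) and 128b/130b line encoding; the function names are my own, and the calculation ignores packet and protocol overhead, so the measured figures are expected to land somewhat below the peak.

```python
# Compare the measured 3DMark throughput with the theoretical x4 link peak.
# Per-lane raw rates in GT/s; PCIe 3.0 and 4.0 both use 128b/130b encoding.
GT_PER_S = {"3.0": 8.0, "4.0": 16.0}
ENCODING = 128 / 130

def theoretical_gb_per_s(gen: str, lanes: int) -> float:
    """Peak one-direction bandwidth in GB/s (decimal), before protocol overhead."""
    return GT_PER_S[gen] * ENCODING * lanes / 8

def efficiency(measured_gb_per_s: float, gen: str, lanes: int) -> float:
    """Fraction of the theoretical peak that the measurement reached."""
    return measured_gb_per_s / theoretical_gb_per_s(gen, lanes)

# Measured values from the 3DMark test quoted in this article (RTX 3070, x4).
MEASURED = {"3.0": 3.36, "4.0": 6.71}

if __name__ == "__main__":
    for gen, measured in MEASURED.items():
        peak = theoretical_gb_per_s(gen, 4)
        print(f"PCIe {gen} x4: peak {peak:.2f} GB/s, measured {measured:.2f} GB/s, "
              f"{efficiency(measured, gen, 4):.0%} of peak")
```

Both modes come out at roughly 85% of the theoretical peak, and the PCIe 4.0 result is almost exactly double the PCIe 3.0 one, which suggests the link itself scales as expected and the problem is limited to the CPU boost behavior.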

Conclusions

The eGPU setup worked well, although it's not mass-consumer ready yet. It requires manual setup and running a magic script. The egpu.io community recommends the OSMETA GK01 adapter board, which also has some additional power-on management options for less compatible systems.

Intel and AMD are increasing the iGPU size for 2024 and future products. The key question is how they will manage memory for them. The Ryzen 7840U, with the fastest LPDDR5x 7500MT/s memory it can handle, reaches 76% of the GTX 1650's Time Spy GPU score but only 43% in FFXIV Endwalker (some of that could be the SoC power budget being diverted fully to the CPU). And don't forget Kaby Lake G, where the GPU and CPU cannibalized each other's thermal headroom.
